MAIN IDEA:

The main idea of this book is to present new developments in the author's work after he obtained funding from IARPA and launched the Good Judgment Project, which systematically analyzed forecasting processes using thousands of volunteers. This project allowed the author to identify a group of successful forecasters, analyze exactly how they work, and produce a fairly good description of successful patterns of behavior and processes.

DETAILS:

- An Optimistic Skeptic

The author starts with the point that we are all forecasters, trying to predict future events with varying success. He refers to his earlier work on forecasting quality, conducted back in the 1980s, which demonstrated the very poor quality of forecasts by experts and pundits and became a source of pessimism about this popular activity. The new research discussed in this book provides reasons for a more optimistic view of the possibilities for improving forecasting quality. This research, called the Good Judgment Project (GJP), is sponsored by IARPA and based on formal forecasting and result-analysis processes carried out mainly by self-selected volunteers. These processes made it possible to identify a group of superforecasters who were able to beat the control group by 60-70%. The final part of the chapter refers to recently developed AI capabilities that, in conjunction with humans, promise a significant improvement in the quality of forecasts.

- Illusions of Knowledge

This chapter starts with some examples of mistaken medical diagnoses and, correspondingly, mistaken forecasts, then expands to discuss human blindness to facts and the many historical cases showing how severe this blindness is and how negative its consequences can be. One example is the once-common medical practice of bloodletting, which led doctors to kill many patients, probably including George Washington. In our time, a growing understanding of the deficiencies in human thinking has led to new procedures in medicine and to resources being devoted to analyzing the process of thinking itself. The final part discusses the popular notion of “blink” versus the necessity of systematic thinking, and Kahneman’s work on fast and slow thinking.

- Keeping Score

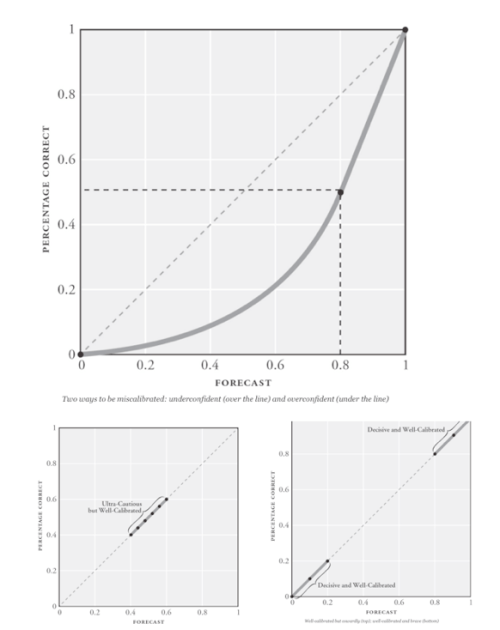

This chapter is about the difficulty of keeping score of forecasts. It uses the example of Steve Ballmer, who famously made a statement seemingly underestimating the iPhone’s potential. The actual statement, however, had enough wiggle room to be spun as a pretty good forecast. The author then discusses an interesting example of an important forecasting failure: the dissolution of the Soviet Union. After that he turns to some technical aspects of forecasting: over- and underconfidence, decisiveness, and calibration.
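Calibration means that events given a stated probability should happen about that often. A minimal sketch, assuming a forecaster's record is stored as hypothetical (probability, outcome) pairs with outcome 1 if the event happened and 0 otherwise; the function name `calibration_table` is illustrative, not something from the book:

```python
from collections import defaultdict

def calibration_table(forecasts):
    """Group (probability, outcome) pairs by the stated probability and
    return {probability: observed frequency of the event}."""
    buckets = defaultdict(list)
    for prob, outcome in forecasts:
        buckets[prob].append(outcome)
    # Observed frequency per bucket, ordered by stated probability.
    return {prob: sum(outs) / len(outs) for prob, outs in sorted(buckets.items())}

# Hypothetical record: ten 70% calls and four 20% calls.
history = [(0.7, 1)] * 7 + [(0.7, 0)] * 3 + [(0.2, 1)] * 1 + [(0.2, 0)] * 3
print(calibration_table(history))  # {0.2: 0.25, 0.7: 0.7}
```

Here the 70% calls came true exactly 70% of the time, while the 20% calls came true 25% of the time. Real scoring would group nearby probabilities into ranges rather than exact values; exact values keep the sketch short.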

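The author also establishes here the main criterion for forecast quality: the Brier score, on a scale where 0 is a perfect forecast, 0.5 corresponds to random guessing (a hedged fifty-fifty call), and 2 is a forecast that is wrong to the greatest possible extent.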
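The 0 to 2 range comes from Brier's original formulation, which sums squared errors over every possible outcome. A minimal sketch for a yes/no question, assuming that two-category form; the function names are illustrative:

```python
def brier_score(prob_yes, happened):
    """Two-category Brier score for a yes/no question: squared error
    summed over both outcomes, from 0 (perfect) to 2 (maximally wrong)."""
    outcome_yes = 1.0 if happened else 0.0
    prob_no, outcome_no = 1.0 - prob_yes, 1.0 - outcome_yes
    return (prob_yes - outcome_yes) ** 2 + (prob_no - outcome_no) ** 2

def mean_brier(forecasts):
    """Average Brier score over (prob_yes, happened) pairs; lower is better."""
    return sum(brier_score(p, h) for p, h in forecasts) / len(forecasts)

print(brier_score(1.0, True))   # perfect call: 0.0
print(brier_score(0.5, False))  # hedged fifty-fifty call: 0.5
print(brier_score(1.0, False))  # certain and wrong: 2.0
```

Like a golf score, a forecaster's average over many questions (`mean_brier`) is what gets compared.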

The author also returns here to the results of his 1980s study and its findings about the impact of personality type on forecast quality, with foxes being much better than hedgehogs. The final part is about “wisdom of crowds” aggregation, which sometimes drastically improves forecasts if the diversity of views is good, meaning wide enough to achieve a healthy cancellation of extremes.
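One way to see why diversity matters, not taken from the book, is the identity Scott Page calls the diversity prediction theorem: the squared error of the crowd's average equals the average individual squared error minus the spread (variance) of the individual estimates. A minimal sketch with hypothetical numbers:

```python
def crowd_stats(estimates, truth):
    """Return (crowd error, average individual error, diversity),
    all measured as squared errors, for a list of numeric estimates."""
    n = len(estimates)
    crowd = sum(estimates) / n  # the simple average is the crowd's answer
    crowd_error = (crowd - truth) ** 2
    avg_individual_error = sum((x - truth) ** 2 for x in estimates) / n
    diversity = sum((x - crowd) ** 2 for x in estimates) / n
    return crowd_error, avg_individual_error, diversity

# Hypothetical estimates of a quantity whose true value is 105.
c, a, d = crowd_stats([80, 130, 95, 110, 85], truth=105)
print(c, a, d)    # 25.0 355.0 330.0
print(a - d == c)  # True: crowd error = average error - diversity
```

The crowd beats its typical member by exactly the amount of disagreement among members, which is why a diverse crowd whose errors point in different directions cancels them out.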

- Superforecasters

Here the author reviews findings from his recent project with IARPA, which allowed him to identify individuals with significantly better forecasting results than average. The bulk of the discussion is about differentiating between luck and skill, which the author does mainly by tracing regression to the mean: its absence indicates that skill prevails over luck.
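To make the luck-versus-skill test concrete, here is a minimal simulation sketch, not the book's actual analysis. Each hypothetical forecaster has a fixed skill level plus fresh random luck every year, and the code measures how much of the top group's year-1 advantage survives into year 2. The function name, the 2% cutoff, and the Gaussian skill and luck are all assumptions made for illustration.

```python
import random

def retained_advantage(skill_weight, n=5000, top_frac=0.02, seed=1):
    """Simulate two years of scores (higher = better) for n forecasters.
    Each year's score = skill_weight * fixed skill + fresh random luck.
    Return the share of the top group's year-1 advantage over the average
    that persists in year 2 (near 0 means full regression to the mean)."""
    rng = random.Random(seed)
    skills = [rng.gauss(0, 1) for _ in range(n)]
    year1 = [skill_weight * s + rng.gauss(0, 1) for s in skills]
    year2 = [skill_weight * s + rng.gauss(0, 1) for s in skills]
    k = max(1, int(n * top_frac))
    # The top group is chosen on year-1 results only.
    top = sorted(range(n), key=lambda i: year1[i], reverse=True)[:k]
    mean1, mean2 = sum(year1) / n, sum(year2) / n
    adv1 = sum(year1[i] for i in top) / k - mean1
    adv2 = sum(year2[i] for i in top) / k - mean2
    return adv2 / adv1

print(round(retained_advantage(0.0), 2))  # pure luck: expected near 0
print(round(retained_advantage(1.0), 2))  # skill as large as luck: roughly 0.5
```

With pure luck, the year-1 stars fall back almost entirely to the average; when skill matters as much as luck, about half of their edge should persist. The exact printed values depend on the seed.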

- Supersmart?

This chapter is about the notion of smartness or intelligence and how it is defined via IQ testing or Fermi questions (such as estimating the number of piano tuners in Chicago). The author then applies these notions to a real-life forecasting question: whether investigators would find that the Israelis poisoned Arafat. Here he presents an interesting concept, Active Open-Mindedness (AOM), developed by Jonathan Baron and measured by agreement or disagreement with a set of statements.
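The Fermi approach mentioned above breaks one unknowable number into several guessable ones and multiplies them. A minimal sketch; every input is a hypothetical guess chosen for round arithmetic, not a figure from the book, and the function name is illustrative:

```python
def fermi_piano_tuners(population, people_per_household, share_with_piano,
                       tunings_per_piano_per_year, tunings_per_tuner_per_day,
                       workdays_per_year):
    """Fermi-style estimate of how many piano tuners a city supports."""
    households = population / people_per_household
    pianos = households * share_with_piano
    tunings_needed = pianos * tunings_per_piano_per_year  # demand per year
    tunings_per_tuner = tunings_per_tuner_per_day * workdays_per_year  # supply per tuner
    return tunings_needed / tunings_per_tuner

# All inputs are hypothetical guesses, not data.
estimate = fermi_piano_tuners(
    population=2_500_000, people_per_household=2.5, share_with_piano=0.05,
    tunings_per_piano_per_year=1, tunings_per_tuner_per_day=4,
    workdays_per_year=250,
)
print(round(estimate))  # 50
```

The value of the method is less the final number than the fact that each guess is exposed and can be checked or revised separately.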

- Superquants?

This chapter is about applying math and statistics to forecasting and the need for precision (stating forecasts as percentages rather than vague words such as likely/unlikely). The author illustrates how typical verbal expressions of probability allow granular, but not precise, analysis.

At the end of the chapter, the author looks at the difference between probabilistic and deterministic approaches.

- Supernewsjunkies?

This chapter is about successful forecasters from the project, their methods, and cases of over- or under-forecasting actual events. It also includes a brief discussion of Bayes’ theorem and how it improves a forecast when new information is taken into account.
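Bayes’ theorem can be written in odds form: posterior odds equal prior odds times the likelihood ratio of the new evidence. A minimal sketch of one update step, assuming the forecaster can put rough numbers on how likely the evidence would be if the hypothesis were true and if it were false; the function name and all probabilities are hypothetical:

```python
def bayes_update(prior, p_evidence_if_true, p_evidence_if_false):
    """Update the probability of a hypothesis after seeing evidence,
    using Bayes' theorem in odds form:
    posterior odds = prior odds * likelihood ratio."""
    prior_odds = prior / (1 - prior)
    likelihood_ratio = p_evidence_if_true / p_evidence_if_false
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1 + posterior_odds)  # odds back to probability

# Start at 50%, then see evidence three times likelier if the
# hypothesis is true (60%) than if it is false (20%).
p = bayes_update(0.5, 0.6, 0.2)
print(round(p, 2))   # 0.75

# A second, weaker clue (likelihood ratio 1.25) moves it only a little.
p2 = bayes_update(p, 0.5, 0.4)
print(round(p2, 2))  # 0.79
```

Strong evidence produces a big jump, weak evidence a small nudge, which is the incremental updating the chapter describes.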

- Perpetual Beta

This chapter is very valuable because the author not only discusses the need for practical experience rather than theoretical knowledge in forecasting, the necessity of failure, and the imperative of thorough analysis and adjustment, but also provides a portrait of the model superforecaster derived from his research.

- Superteams

Here the author discusses the value of teamwork in forecasting, initially questioning the validity of a team approach in such a deeply intellectual activity as analysis and forecasting. The conclusion is that teams are generally more cumbersome, but their forecasts are about 23% more accurate than those of the same people working individually.

- The Leader’s Dilemma

This chapter starts with a very interesting digression into military history, discussing a little-known quality of the German military that made it such a formidable power during the two World Wars: a culture of independent thinking and consistent encouragement of decision making at the lowest level of the hierarchy that still has the competence the decision requires. The author contrasts this with rigid, hierarchical decision making in the American military and shows how difficult it is to overcome. One important lesson is that even though the German military was clearly a force for evil, this should not prevent a good analyst from learning from whatever strengths it had and applying that knowledge.

- Are They Really So Super?

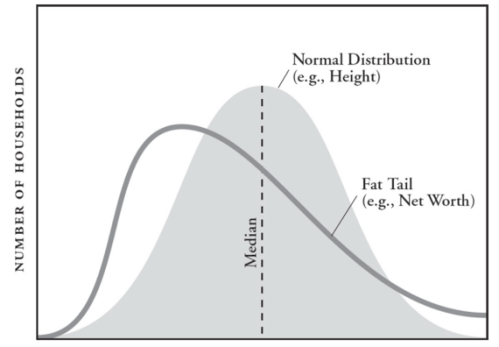

Here the author discusses people’s tendency to ignore information that contradicts their preset opinions and to stress information that supports them. He uses recent political developments in the Middle East to demonstrate how bureaucracies often fail to anticipate changes in a situation and how black swan events tend to pop up in unexpected places. Another point the author makes is that failing to collect real data often leads to mistakes that could easily be avoided if analysts relied on real data rather than preconceived ideas. He provides a nice example with income distribution: formally applying a normal distribution puts the chance of someone being a billionaire at one in trillions, when in reality the fat-tailed actual income distribution makes it much more probable.
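The gap between the two models can be sketched by comparing tail probabilities. This is a minimal illustration, not the book's calculation: it assumes a normal (thin-tailed) model and a Pareto (power-law, fat-tailed) model, and every parameter below is hypothetical, chosen only to show the shapes.

```python
import math

def normal_tail(x, mean, sd):
    """P(X > x) for a normal distribution."""
    return 0.5 * math.erfc((x - mean) / (sd * math.sqrt(2)))

def pareto_tail(x, x_min, alpha):
    """P(X > x) for a Pareto (power-law) distribution defined for x >= x_min."""
    return (x_min / x) ** alpha if x > x_min else 1.0

# Hypothetical parameters, not real income statistics.
threshold = 1_000_000_000  # one billion
thin = normal_tail(threshold, mean=50_000, sd=30_000)
fat = pareto_tail(threshold, x_min=20_000, alpha=1.5)
print(thin)  # 0.0: too small for a floating-point number to represent
print(fat)   # about 9e-08, roughly 1 in 11 million
```

Under the normal model, a billion is tens of thousands of standard deviations away and effectively impossible; under a power law the probability shrinks only polynomially, so extreme values remain rare but real.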

- What’s Next?

This is an interesting take on the future of forecasting, in which the author correctly identifies the objective of a forecast as promoting the well-being of the forecaster, with accuracy being a useful, but not ultimate, parameter in achieving this objective. As examples, the author discusses the political events around Mitt Romney’s 2012 presidential campaign and the difficulties of implementing evidence-based medical policies. In both cases objective data are pushed aside when they undermine the well-being of political analysts in the former case and of the medical profession in the latter. Interestingly, the author also discusses the vulnerability of all objective facts and numbers to manipulation, but argues that they are still the best methodology we have. He also makes a point about the post factum situation, when experts often manage to spin results to such an extent that a failed forecast looks justifiable and, somewhat laughably, even successful.

Epilogue:

The final word is that superforecasters such as Bill Flack, one of the Good Judgment Project participants, who is often right about future events, and “strategic thinkers” like Tom Friedman, who was consistently wrong, are in reality complementary, because people like Friedman are good at raising questions, even if they typically cannot provide meaningful answers, leaving that job to superforecasters like Bill.

MY TAKE ON IT:

I think it is a great book that significantly expands on the author’s initial work, which demonstrated the low ability of typical experts and pundits to correctly forecast future events. The success in identifying and partly formalizing the method of effective forecasting is very important and may in the future lead to the creation of independent sources for analyzing and forecasting political, economic, and legislative decisions. Nevertheless, I personally believe that the world is far too complicated for anybody, or any combination of humans and computers, to forecast future events correctly. So the best way to prosperity and good outcomes is to minimize the need for complex, high-level decision making by decreasing the role of governments, big corporations, and legislatures in everyday life, pushing decisions to as low a level as possible and practical, and consequently dramatically decreasing the cost of errors and unintended consequences.