20211030 – Facing Reality

MAIN IDEA:

The author’s main idea is to call attention to the dismal condition of the American polity, which is under severe stress from racial tensions and identity politics. The author fears that the American creed, which he defines as the ideal of a multiracial and mainly classless society, is falling apart. Therefore, he calls for action to rejuvenate and restore this creed. To that end, the author devotes the bulk of the book to demonstrating, with factual data and statistics, the real differences between races in IQ and crime rates. He also argues that racial discrimination directed against Whites and Asians and designed to suppress their statistical advantages is not just unfair but dangerous. If Whites, who are the majority of the population, were to become another special interest group, society in its current form could not survive.

DETAILS:

Introduction

The author defines the current reality as a struggle for America’s soul, and he wrote this book to clarify two facts that people are afraid to look at: “The first is that American Whites, Blacks, Latinos, and Asians, as groups, have different means and distributions of cognitive ability. The second is that American Whites, Blacks, Latinos, and Asians, as groups, have different rates of violent crime. Allegations of systemic racism in policing, education, and the workplace cannot be assessed without dealing with the reality of group differences.”

Chapter One: The American Creed Imperiled

The author presents his understanding of the American creed as expressed in the Declaration of Independence’s statement that “All men are created equal,” and then describes recent American history, including the successful civil rights movement. He then turns to 21st-century developments that have challenged this creed. The key component of these developments is identity politics, which he defines this way: “The core premise of identity politics is that individuals are inescapably defined by the groups into which they were born – principally (but not exclusively) by race and sex – and that this understanding must shape our politics.” The author also identifies another premise that he intends to challenge: “…the premise that all groups are equal in the ways that shape economic, social, and political outcomes for groups and that therefore all differences in group outcomes are artificial and indefensible. That premise is factually wrong. Hence this book about race differences in cognitive ability and criminal behavior.”

Chapter Two: Multiracial America

This chapter begins with a description of multiracial America:

After describing the general racial breakdown of the population, the author discusses the racial geography of multiracial America, including the big cities that have gone from a White majority to a White minority. The total population of big cities (500,000+) is 127 million people, or 39% of the population. Outside the big cities, the European percentage rises to 71%. The author also presents a color-coded map of racial distribution:

Chapter Three: Race Differences in Cognitive Ability

In this chapter, the author presents his position on the average cognitive ability of each racial group. His contentions are:

- When Africans, Asians, Europeans, and Latins take tests that are related to cognitive ability, their group results have different means.

- Race differences between Africans and Europeans in cognitive test scores narrowed significantly during the 1970s and 1980s, but the narrowing stopped three decades ago.

- Scores on today’s most widely used standardized tests, whether they are tests of cognitive ability or academic achievement, pass the central test of fairness: They do not underpredict the performance of lower-scoring groups in the classroom or on the job.

The author also refers to several specific studies and explains how to interpret the results. For example, here is the table demonstrating group variance:

At the end of the chapter, the author discusses the meaningfulness of these findings.

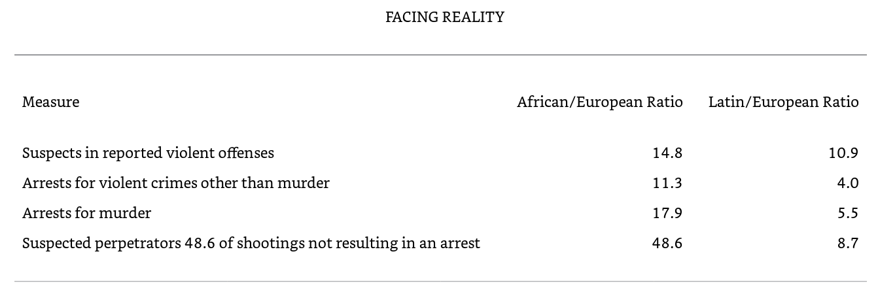

Chapter Four: Race Differences in Violent Crime

In this chapter, the author takes a similar statistical approach to analyzing racial group differences in criminal activity. The data relate mainly to 13 states of the USA and are summarized in several tables, all of which demonstrate similar trends. Here is the summary of the findings.

Chapter Five: First-Order Effects of Race Differences in Cognitive Ability

In this chapter, the author enumerates the effects of group differences in cognitive ability. For example, these are the impacts on the job market:

- Measures of cognitive ability and job performance are always positively correlated.

- The size of the correlation goes up as the job becomes more cognitively complex.

- Even for low-skill occupations, job experience does not lead to convergence in performance among persons with different cognitive abilities.

- For intellectually demanding jobs, there is no point at which more cognitive ability doesn’t make a difference. Increases in IQ scores are statistically associated with increases in productivity at every level of cognitive ability.

For impacts on educational achievement, the author provides statistical results of admission tests for professional programs:

At the end of the chapter, the author presents the consequences of affirmative action:

“The 2014–2018 American Community Survey found that Africans, at 13 percent of the population, accounted for only 3.6 percent of CEOs, 3.7 percent of physical scientists, 4.4 percent of civil engineers, 5.1 percent of physicians, and 5.2 percent of lawyers. Latin percentages in those prestigious occupations ranged from 5.3 to 7.6 percent, but Latins are almost 18 percent of the population, so their underrepresentation was nearly the same.

The picture flips when race differences in cognitive ability and job performance are taken into account. Africans and Latins get through the educational pipeline with preferential treatment in admissions to colleges and to professional programs. Their mean IQs in occupations across the range from unskilled to those requiring advanced degrees are substantially lower than the mean IQs for Europeans in the same occupations. Race differences in measures of on-the-job performance are commensurate with the differences in cognitive ability.

I think it is fair to conclude that the American job market is indeed racially biased. A detached observer might even call it systemic racism. The American job market systemically discriminates in favor of racial minorities other than Asians.”

Chapter Six: First-Order Effects of Race Differences in Crime

In this chapter, the author reviews the consequences of high crime rates among minority groups. Looking at big cities, he finds that many crimes and arrests are concentrated in specific zip codes. He links this to stunted economic activity, the result of the high cost and even danger of doing business in high-crime areas. The author also reviews multiple political interventions and government expenditures, none of which produced sustainable improvement. Similarly, high crime shielded by a massive grievance industry makes policing defensive, with police officers more concerned with protecting themselves than with anything else. The author also discusses small-city and rural America, where crime is much lower and, interestingly enough, varies much less by race.

Chapter Seven: If We Don’t Face Reality

The final chapter represents the author’s sum of all fears. He regrets having once dismissed identity politics as just a college students’ game and states his belief that it now presents an existential threat to America. His big fear is that the white majority will respond to growing defamation and discrimination against it with its own identity politics. The author draws a parallel with the BLM movement and warns: “The question asks itself: If a minority consisting of 13 percent of the population can generate as much political energy and solidarity as America’s Blacks have, what happens when a large proportion of the 60 percent of the population that is White begins to use the same playbook? I could spin out a variety of scenarios, but I don’t have confidence in any of them. I am certain of only two things.

First, the White backlash is occurring in the context of long-term erosion in the federal government’s legitimacy. Since 1958, the Gallup polling organization has periodically asked Americans how much they trust the federal government to do what is right. In 1958, 73 percent said “always” or “most of the time.” Trust hit its high point in 1964, when that figure stood at 77 percent. Then it began to fall. By 1980, only 27 percent trusted the government to do what is right. That percentage rebounded to the low 40s during the Reagan years, then fell to a new low, 19 percent, in 1994. It rebounded again, hitting a short-lived high of 54 percent just after 9/11. Then it plunged again, hitting another new low, 15 percent, in 2011. It has been in the 15–20 percent range ever since. A government that is distrusted by more than 80 percent of the citizens has a bipartisan legitimacy problem.”

In the end, the author makes an appeal: “The return to an embrace of the American creed must be a celebration of America’s original ideal of equality under the law.” He believes this is possible if supporters of the American creed on both sides of the political divide start expressing their support loudly and actively. They should also stop demonizing each other, acknowledge that people on the other side also love this country, and start looking for compromises.

MY TAKE ON IT:

I generally agree with the author that the balkanization of America currently underway could lead to tremendous pain and suffering. To me, the idea that non-elite whites would sheepishly agree to be second-class citizens and passively suffer all kinds of restrictions and humiliations to pay for the sins of the past seems just plain unrealistic. However, I do not think that an accurate restatement of racial groups’ deficiencies would help with this problem. Actually, I believe that elite whites who actively promote identity politics are not only well aware of the statistically lower IQ and higher crime rates of blacks and Hispanics but count on them to help them stay in power. Nothing could be more threatening to a mid- to upper-level bureaucrat or politician than a lower-middle-class, high-IQ kid striving to move up and push this bureaucrat out of his comfy place. Therefore, for such bureaucrats and politicians, identity politics, which would replace this dangerous kid with a lower-IQ but racially correct alternative, is just too good an instrument for fending off this threat. The best way to correct this issue is to disregard statistical differences and demand an individual approach, with double-blind selection of candidates for any preferred and competitive position. Anything else should be treated as open racism, regardless of whether it is anti-black, anti-white, or anti-Hispanic. Individuals at the higher levels of government, educational, or corporate hierarchies who practice it should be fired immediately and afterward treated the same way as sexual predators, so that people would be alerted if they moved into nearby areas.

20211023 – Escaping Paternalism

MAIN IDEA:

The main idea is to review behavioral economics in great detail and to demonstrate that its promoters’ claims are often excessive, frequently rest on research isolated from reality, and greatly oversimplify rationality, or the lack thereof, in human behavior. However, the overriding objective of this book is to provide viable intellectual tools for rejecting attempts to limit individual freedom through the coercive intervention of bureaucrats and politicians in individual decision-making, under the pretense that they know better than people themselves what those people need.

DETAILS:

1 Introduction:

The Rise of the New Paternalism

The Old versus the New Paternalism

A Sampling from the Behavioral Paternalist Agenda: Sin Taxes, Default Enrollment in Savings Plans, Cooling-Off Periods, Risk Narratives, Graphic Images, Employee-Friendly Terms in Labor Contracts, Outright Bans;

A Gauntlet of Challenges

Caveats and Clarifications: Arguments versus Policies, Behavioral Arguments for Nonpaternalist Policies, Freedom and Autonomy;

People, Not Puppets

This introduction describes the new paternalism that has recently developed out of behavioral economics. The authors suggest that it differs from the old paternalism, which held that elite experts know better than people themselves what is best for them. The new paternalism abandons this claim and professes not to dictate people’s wants but to discover them. The new paternalists also claim to know how to make better decisions, and they want the power to nudge people to act correctly. The authors define the key objective of the book as “presenting the conceptual and consequentialist case against behavioral paternalism. Inasmuch as the case for behavioral paternalism rests on its supposedly beneficial consequences, our response in most respects constitutes an immanent critique.”

2 What Is Rationality?

Explicit and Implicit Components of Purposeful Behavior

Rules as a Tool of Rationality

Bounded Rationality and the Limits of Models

The Functional Value of Biases and Errors

Positive, Normative, and Prescriptive

This chapter defines the new notion of inclusive rationality: “Inclusive rationality means purposeful behavior based on subjective preferences and beliefs, in the presence of both environmental and cognitive constraints. This notion of rationality preserves the core notion of purposefulness, and in that sense, it should seem familiar. But unlike other notions of rationality – many of which were invented for modeling purposes but have since taken on a life of their own – inclusive rationality does not dictate the normative structure of preferences and beliefs a priori. Instead, it allows a wide range of possibilities in terms of how real people select their goals, form and revise their beliefs, structure their decisions, and conceptualize the world. Their preferences and beliefs may be inchoate, incomplete, inconsistent, mutable, and dependent on context. Inclusive rationality can thus encompass choices and strategies that would not make sense under more restrictive notions of rationality.” The authors present specific features of inclusive rationality and discuss how it differs from formal rationality and irrationality. They also discuss conscious and unconscious components of purposeful human behavior, bounded rationality that limits human reasoning abilities, and the resulting deficiencies in human actions aimed at achieving the best available results. Finally, the authors provide a list of reasons why experimental subjects may depart from the answers that formal models treat as correct, a list that pretty much invalidates behavioral economists’ claim to be able to improve people’s lives by manipulating their behavior in the “correct” direction:

- They may assume, in accordance with ordinary conversational norms, that experimenters provide only information that is relevant to solving the problem – i.e., no irrelevant or “tricky” information. They do not immediately assume the experimenters are trying to fool them.

- They may resist the distinction between the validity of a syllogistic inference (e.g., “People with red hair are Martians, John has red hair, therefore John is a Martian”) and the truth of a conclusion itself (John is not a Martian). Normally, in everyday life, it is the truth that is more important.

- They may not assume that prior probabilities about something – such as the likelihood that someone has a disease – must be equal to the “base rates” from the population provided to them. Instead, their priors may be affected by their evaluation of the significance of the base rates to a particular problem in front of them – say, whether a specific person who chose to visit the doctor and chose to take a test has the disease. Treating priors in this way is fully consistent with the subjectivist Bayesian view that prior probabilities are subjective – a fact frequently ignored in the rush to deem subjects “irrational.”

- They may not agree with model-builders on the informational equivalence of different descriptions of a situation. Instead, they may infer implicit information or advice from how a problem is presented. For example, they may perceive an important difference between a stated probability of success equal to 0.7 and a stated probability of failure equal to 0.3. Perhaps the former conveys greater optimism, despite the formal mathematical equivalence of the two statements. Conversational norms and expectations do not always align with logic and probability theory. The former can be adaptive in the real world while the latter is adaptive on experimental tests. Which is more important?

- They may attach satisfaction or utility to things other than what the analysts expect. For instance, they may value an object more because it is theirs already. Or they may care about feelings of gain and loss experienced during the experiment, not just how much money they have when they leave the laboratory. Or they may gain satisfaction purely from having a particular belief, irrespective of its truth (“My wife is beautiful and my children are gifted”).

At the end of the chapter, the authors clearly state their position: “The simple fact that individuals do not behave in accordance with standard theories is not evidence of failure in this broader normative sense. It is certainly not evidence in favor of fixing their behavior. The norms of standard neoclassical rationality are not prescriptions for better behavior. Behavioral economists have unfortunately accepted the prescriptive relevance of the received theory even as they have rejected its predictive accuracy in a wide range of behavior.” In this book, the authors are mainly concerned with the normative and prescriptive aspects of rationality. Therefore, their disagreement is with both standard and behavioral economics, given that both are wedded to the same prescriptive view of rationality.

3 Rationality for Puppets:

The Axioms of Preference Rationality

Neoclassical Rationality as the Behavioral Welfare Standard

The Origin of Neoclassical Rationality in Economic Theory

Rational Violations of “Rational Preference”: Preference Discovery, Preference Formation, Economizing on Cognitive and Noncognitive Effort, Preference Rotation, Illustrative Examples;

What About the Money Pump?

Description and Redescription

The Non-Sequitur of Resolving Preference Inconsistencies

Interpreting Behavioral Inconsistency

Authors’ Conclusions: “Behavioral paternalists rest their case on the evidence that normal people violate basic tenets of rationality. But what do they mean by rationality? It turns out behavioral economists use the same definition of rationality as their neoclassical counterparts. Neoclassical or “puppet” rationality rests on two axioms – completeness and transitivity – that together impose a form of consistency on the structure of people’s preferences. Other characteristics of neoclassical rationality, such as framing invariance and independence of irrelevant alternatives, derive from these more basic axioms. Although behavioral paternalists have rejected neoclassical rationality as a positive description of human behavior, they have nevertheless maintained it as a normative standard. In this chapter, we have argued that this was a mistake. The axiomatic definition of rationality was developed primarily, if not entirely, for positive (i.e., descriptive or explanatory) analysis. The axioms justified the use of utility functions, an important step along the path to proving propositions such as the existence of a competitive market equilibrium. They made economic models mathematically tractable, and they facilitated the generation of testable hypotheses. In short, they enabled the creation of simple, functional, and often quite useful puppets to populate economic models, thereby satisfying the needs of the model-builders. But however useful the neoclassical axioms may have been for positive purposes, they never had a strong normative justification. They may be violated in many reasonable ways. Normal people may be found in the process of discovering their preferences, or even the process of creating them. They may decide, consciously or otherwise, that the costs of completely rationalizing their preferences exceed the benefits of doing so, and so they allow their preferences to remain inconsistent.
A variety of examples show that people’s preferences may be incomplete or intransitive for understandable reasons that do not obviously demand correction. Our inclusive notion of rationality allows for all of these deviations from the neoclassical structure. The simplistic axioms of puppet rationality cannot capture the breadth and variety of how real human beings evaluate options and make choices. Many of the problems discussed in this chapter are not new, but presenting them together here demonstrates that the normative case for puppet rationality is extraordinarily weak, at least outside of special cases. The neoclassical axioms of preference may have descriptive or explanatory value – or, given the work of behavioral economists, they may not. But to call them “rationality requirements” is normatively arbitrary. If we gave them another name – say, “structural assumptions” – they would still perform the function for which they were created without deceiving economists or the public into thinking that nonconforming behavior or preferences need to be “fixed.”

4 Preference Biases:

Intertemporal Trade-Offs and Time-Discounting Inconsistencies: Time: Objective and Subjective, Preference Reversal, Intransitive Intertemporal Choices, Do Nonstandard Intertemporal Decision-Makers Suffer?

Endowment Effects: Loss Aversion as a Cause of Endowment Effects, Status Quo Bias as a Cause of Endowment Effects, Mere Ownership as a Cause of Endowment Effects, Contrary Evidence;

Affective Forecasting: Impact Bias as Procedural Artifact? Cognitive Feedback: Attention and Learning;

Authors’ Conclusions: “In this chapter we have shown that the phenomena known as “preference biases” are far more complex than they are often portrayed to be. Sometimes more penetrating analysis shows that the evidence for their existence is weak. Other times they are (at least partially) artifacts of imprecise or misdirected questioning of subjects. And yet other times, evidence suggests they may function as adaptations to a broader set of behavioral and environmental factors than are normally considered. Even more importantly, the normative analysis of biases is often arbitrary. Biases are typically demonstrated by showing inconsistencies in preferences and then choosing one set as normative. But alleging inconsistencies does not in itself enable us to say which preferences are normative – particularly when other behavioral factors play a role in generating the behavior in question. For example, there is good evidence to suggest that both short- and long-run discount rates are “contaminated” and therefore neither has a clearly better claim to superiority. Or, as we’d rather say, neither is contaminated; they just are what they are. Agents do not typically exhibit pure neoclassical preferences. And this is not obviously a bad thing. In the real world, agents need not be worse off by their own lights when their behavior exhibits what outside observers would regard as bias.”

5 The Rationality of Beliefs:

The Functions of Beliefs and Learning: Optimistic Beliefs;

Rational Irrationality;

Rational Violations of Classical Logic: Logical Equivalence versus Informational Equivalence, Wason Selection Test: Confirmation Bias? Nonmonotonicity, Wason Selection Test as Maximizing Expected Information Gain;

The Conjunctive Effect: Conversational Norms and the Maxim of Relevance, Interpretation of Intersecting Events as Mutually Exclusive, Inductive Confirmation of Hypotheses;

Bayes’ Rule, Base-Rate Neglect, and Belief Revision: Base Rates Are Not Necessarily Prior Probabilities, Changing Causal Structure and Base-Rate Instability, False-Alarm Rates and Hit Rates May Not Be Independent of Base Rates, Magnification of Errors in a Noisy World, Not All Base Rates Are Created Equal;

Availability Bias and Frequency Judgments: Pinning Down the Meaning of Availability, Diagnosticity and Availability, Salience;

Overconfidence and Probability Judgments: How to Make Guesses on Trivia Questions, Degrees of Confidence versus Subjective Probabilities, Subjective Probabilities and Objective Frequencies, When Is the Implied Expectation Consistent with the Actual Frequency? When Is the Implied Expectation “Overconfident” but the Frequency Judgement Accurate? Coherence or Adapted Frameworks? The Data: Extreme Format Dependence, The Economics of Prediction: Trade-Offs

Authors’ Conclusions: “We have covered a wide range of cognitive operations and phenomena in this chapter – from the logical to the probabilistic. We have found that the literature on cognitive biases, vast though it is, tends to fail in one fundamental respect: recognizing the pragmatic and contextual nature of rational decision-making. The mistake that is constantly and consistently made is to equate rationality with an abstract system of thought unrelated to the purposes and plans of individuals in the environments in which they find themselves. In a related manner, the literature also fails to take into account the socially legitimate expectations of the participants in experiments that the researchers should not provide extraneous or misleading information. These are problems that go to the very heart of the “heuristics and biases” research program. Our perspective, by contrast, recognizes that beliefs serve a purpose – and that purpose is not always truth-tracking. Beliefs can direct attention and provide motivation. Beliefs can be a source of direct satisfaction. Even when beliefs perform a primarily truth-tracking function, there is no uniquely correct way to form and revise beliefs in real-world environments characterized by uncertainty and change. Most importantly, people in realistic contexts do not think like strict logicians and probability theorists – nor should they. While economists and psychologists are greatly concerned with the deductive consistency of beliefs, regular people need not share that concern. People acquire tools for different types of challenge in the wild, and they should not be expected to abandon all such tools when they enter the laboratory. In the study of beliefs, just as in the study of preferences, behavioral researchers have made the mistake of conflating their models with reality – and, when reality fails to conform to the model, judging it deficient.”

6 Deficient Foundations for Behavioral Policymaking:

Context-Specificity of Psychological Findings: Contextuality of the Effect of Moods and Emotions, Contextuality of Loss Aversion and Reference Points, Context-Specificity in Context

Generalizing Quantitative Results from the Lab to the Real World: Stated Choice and Revealed Choice, Quantitative Generalizability, Reproducibility, The Population of Relevance;

Failure to Account Adequately for Incentives: Incentives: Clearing Away the Confounds, Incentive Effects, Learning and Experience, Learning and Errors, Policy Implications of Learning and Incentives

Small-Group Debiasing: Small Groups and Task Performance (Conjunctive Effect. Wason Selection Test. First-Order Stochastic Dominance. Probability Assessment. Probability Matching.) Small Groups and Preference Biases (Myopic Loss Aversion. Present Bias.)

Self-Regulation: Context-Dependence of Self-Regulation, Automaticity of Much Self-Regulation, Biases as Self-Regulation, Self-Regulatory Processes Mistaken for Agent Naivete, Significance of Underestimating the Extent of Self-Regulation, Self-Regulation and the Opportunity Costs of Executive Function

Authors’ Conclusions: “In a survey of the literature on the use of technical research by policy actors, Bogenschneider and Corbett (2010) identify twelve criteria by which the usefulness of research is evaluated for policy purposes. Among those, three stand out as having critical significance for the behavioral and cognitive research we have discussed in this chapter. They are:

- Definitiveness: Results are clear.

- Generalizability: Results are applicable to the jurisdictions or populations of interest to the policymaker.

- Policy Implications: The links between results and policy are clear.

Unfortunately for behavioral paternalism, the research displays serious deficiencies with regard to these criteria. First, it is hard to claim that the results are clear-cut. When incentives, learning, group debiasing, and self-regulation have not been adequately assessed, it is not clear which results we can confidently export to the world of public policy. Second, generalizability is uncertain because the results are highly contextual, the rate of reproducibility is unknown and possibly quite low, and the populations studied do not necessarily resemble those targeted by policy. Finally, the link between results and policy recommendations is far from clear. What appear as biases may in specific contexts actually be debiasing techniques. And the failure of quantitative results to generalize opens the real possibility of overcompensating for perceived biases. Recall our introductory remarks that the claims in this chapter constitute immanent criticism. Even if we agreed that the standard rationality norms of neoclassical and behavioral economics provided an appropriate basis for prescribing public policy, the tools that real people use to achieve their goals and to shape their own behavior are multifarious and resistant to description by simple models. To craft policies that help agents reduce their biases, we still need reliable scientific knowledge about how, when, and where those biases operate, their strength in real-life settings, the extent to which agents learn about and correct biases on their own, and so on. These questions are still largely unanswered, although we can hope that future research will begin to fill in the blank spaces.”

7 Knowledge Problems in Paternalist Policymaking:

A Typology of Knowledge Requirements: Knowledge of True Preferences, Knowledge of the Extent of Bias, Knowledge of Self-Debiasing and Small-Group Debiasing, Knowledge of Dynamic Impacts on Self-Regulation, Knowledge of Counteracting Behaviors, Knowledge of Bias Interactions, Knowledge of Population Heterogeneity;

The Empirical Search for True Preferences: Augmented Revelatory Frame Approach, Unified Behavioral Revealed Preference

The Practically Insurmountable Knowledge Problem;

Authors’ Conclusions: “Behavioral economists overreach when they confidently attribute the increase in 401(k) participation after automatic enrollment to countering biases by creating sticky defaults that people passively accept. Much of the increase in participation is likely attributable to improved information and the recommendation effect of the new default. Biases such as anchoring, limited salient options, and loss aversion do not seem as plausible in this context. While present bias may be operative with regard to decision-making costs, its importance is diminished as decision complexity is reduced. Since automatic enrollment improves the information position of agents, it reduces the complexity of decision-making. Thus, even if employees overweight initial decision costs due to present bias, the impact of this bias is substantially reduced due to the fall in decision costs. Policy-oriented behavioralists are also mistaken in suggesting that the welfare effects of automatic enrollment are unambiguously positive to all groups. There are heterogeneous effects, especially in the class of former optimizers and, in general, when the knowledge of the planners is poor. There are distributional effects within the category of retirement benefits. In addition, there are also a number of substantial unintended consequences, including increased consumer debt and early withdrawal of retirement savings. These have been ignored in previous research because of the narrow focus on 401(k) activity. Behavioralists are also likely mistaken in claiming that the greater use of automatic enrollment observed in recent years is a consequence of private paternalism. The appearance of such may be the result of an excessively loose or vague concept of paternalism – a topic we will address directly in Chapter 10. Employers in the United States are not currently required to provide an automatic-enrollment default. 
They are still maximizing profits and engaging in mutually advantageous bargains with their employees. How likely is it that they have suddenly become benevolent paternalists under the influence of behavioral economics? Finally, we have to wonder why so much attention has been focused on automatic enrollment versus other options. Given the evidence that information and recommendation effects play a significant role in explaining default stickiness, why not advocate explicitly providing the information and recommendations in question? Such messages could be provided in the presence of either the traditional default or active choice. Changing the default rule seems a very indirect way of conveying messages that could be provided directly, especially since implicit messages can easily be misunderstood. We surmise that the focus on automatic enrollment derives from the presence of other (or additional) motives – specifically, the desire to increase retirement savings irrespective of whether that is what any particular individual truly wants”.

8 The Political Economy of Paternalist Policymaking

Rational and Irrational Mechanisms of Government Failure

Rational Ignorance

Concentrated Benefits, Diffuse Costs

Self-Interested Regulators

Bootleggers and Baptists

Public Choice Paternalism in Practice: The Definition of Overweightness and Obesity, Regulation of Cigarettes and Vaping, USDA Nutritional Guidelines

Public Sector Irrationality

Types of Bias that Affect Policymaking: Action Bias, Overconfidence and the Illusion of Explanatory Depth, Confirmation Bias, Availability and Salience Effects, Affect and Prototype Heuristics, Present Bias and Hyperbolic Discounting

Authors’ Conclusions: “Even if policymakers (including voters) were perfectly rational, there would be good reason to doubt that democratic government would generate well-designed paternalist policies. The diffusion of responsibility and accountability inherent in our form of government creates poor incentives for people to become well informed and to demand policies that genuinely track the public interest. Instead, legislators and bureaucrats will tend to promote laws and regulations that garner the support of highly motivated parties, including moralists and activists who want to promote values that others may not share, experts and academics who wish to see their research make an impact, and special-interest groups that stand to benefit financially from paternalistic laws. If policymakers are subject to the same cognitive biases that behavioral economists attribute to regular people, we should expect the policymaking process to be even worse. Such biases are more worrisome in the public sector than the private sector, because the public sector offers far worse incentives for people to curb their irrational tendencies and numerous opportunities to indulge pleasing beliefs and prejudices at low cost. Furthermore, poor decisions in the public sector almost by definition affect large numbers of people who have little or no input into them; in other words, government policy is rife with externalities. As a result, we should expect paternalist (and other) policymaking to suffer from the effects of action bias, overconfidence, the illusion of explanatory depth, confirmation bias, availability bias, and other cognitive limitations. Although we have considered both rational and irrational contributors to government failure separately, it’s worth taking a moment to consider how they interact. 
Behavioral economics indicates that certain types of argument will be more likely to succeed in the political sphere: those that emphasize the urgent need for taking action; those that downplay complexity and emphasize simple solutions; those that flatter people’s current beliefs and attitudes; those that rely on easily recalled and vivid illustrations of alleged problems; and those that emphasize the benevolent goals of the policies in question. Given these tendencies, we should expect the highly motivated parties mentioned earlier to exploit them to advance their agendas. Activists, academics, experts, and industry lobbyists have strong rational incentives to craft their policy proposals so as to maximize their appeal to irrational voters and legislators.”

9 Slippery Slopes in Paternalist Policymaking

The Logic of Slippery Slopes

Gradients and Vagueness in Behavioral Paternalism: How Behavioral Paternalism Creates New Gradients, How Behavioral Paternalism Exploits Existing Gradients

Slippery Slopes with Rational Policymakers: Altered Incentives Slopes, Authority and Simplification Slopes, Expanding Justification Slopes, Application to Smoking Bans, On Experts versus Ordinary People

Slippery Slopes with Cognitively Biased Policymakers: Action Bias, Overconfidence, and Confirmation, Present Bias and Hyperbolic Discounting, Availability and Salience, Framing and Extremeness Aversion, Affect and Prototype Heuristics

The Paternalism-Generating Framework

Rejoinders to Behavioral Paternalist Responses

Authors’ Conclusions: “Slippery-slope arguments are often treated dismissively, sometimes even consigned to lists of logical fallacies as a form of spurious reasoning. Without doubt, some writers do deploy slippery-slope arguments in a casual and imprecise way by simply asserting that seemingly attractive policy A will lead to clearly awful policy B. But this error does not mean all slippery-slope arguments are invalid. Rather, it means that we should pay attention to the specific processes – often probabilistic rather than deterministic – that connect one policy to another, as we have sought to do in this chapter. The slippery slope is a broad category, and many different mechanisms and processes fall under its umbrella. As such, it can be difficult to describe all slippery slopes in summary form. Nevertheless, certain features characterize many, though not all, types of slope. In particular, slopes tend to occur in the presence of vague and ill-defined concepts – what we have called gradients. Consequently, the same features of behavioral paternalism that are problematic on a conceptual level also raise concerns on a pragmatic level. In the earlier chapters of this book, we argued that the theoretical and empirical foundation of behavioral paternalism is fundamentally vague. It relies on distinctions that often fail to hold up under scrutiny, and that in any case cannot be reliably identified in practice. Policies based on such unstable moorings are almost bound to drift from their original justifications, because the justifications were weak and imprecise to begin with. Another common feature of slippery slopes is the presence of multiple and diffuse decision-makers, many lacking in accountability for outcomes. When accountability is lacking due to diffuse responsibility, delayed consequences, and unclear objectives, decision-makers will typically display both rational ignorance and rational irrationality. 
Whatever cognitive biases are present in the private sector will tend to be magnified in the public sector, thereby creating the room necessary for the gradual drift of policies away from their initial purposes as well as the purposeful movement of policy under the influence of moralists and rent-seekers. If behavioral paternalists genuinely care about personal autonomy, as some claim, then they ought to take slippery-slope concerns more seriously than they have thus far. And if behavioral paternalists care about the implementation of thoughtful and well-designed policies, as virtually all of them claim, then they should worry about how slope processes could warp their nuanced justifications and well-intentioned plans. To ignore the risk of slippery slopes is to commit an error that behavioral paternalists often caution against: focusing on present gains at the expense of future (and uncertain) losses. To repeat: the slope risk must be counted among the costs of the initial policy intervention. What, then, can be done to avoid, or more realistically to minimize, the danger of paternalist slopes? We have suggested some of the answers in this chapter. They involve, among other things, rejecting the paternalism-generating framework suggested by behaviorally minded thinkers, and adopting instead a paternalism-resisting framework. Such a framework would emphasize the distinction between voluntary and coercive action, as well as the distinction between private and state action.”

10 Common Threads, Escape Routes, and Paths Forward

Common Threads: The Complexity of Inclusive Rationality, The Indeterminacy of Welfare Criteria, The Role of Incentives and Learning, The Rush to Policy

Escape Routes: Revert to Objective-Welfare Paternalism, Appeal to Obviousness, Shift the Burden of Proof, Loosen the Definition of Paternalism, Rely on the “Libertarian Condition”, Invoke the Inevitability of Choice Architecture, Focus on the Irrational Subset of the Population, Rely on Extreme Cases, Treat Behavioral Paternalism as a Toolbox, Invoke Fiscal Externalities

Recommendations: Replace Puppet Rationality with Inclusive Rationality, Reject the Paternalism-Generating Framework, Have Reasonable Expectations of Policymakers, Maintain Important Distinctions

A Better Path Forward: The authors begin the discussion here with the Harm Principle: “the idea that we are justified in coercing people only for the purpose of preventing harm to others.” The authors state their belief that behavioral paternalists reject this principle, sometimes explicitly, by demanding the use of coercion for “the better good,” and sometimes implicitly, by trying to create conditions in which people are forced to do what is “good for them.” The authors also state their own position: “…we believe others may be making mistakes that harm their well-being, we are free to tell them so. We may even beg and plead if the situation warrants. The advantage of this approach is that it offers potentially useful information and perspective while still respecting people’s right to choose for themselves. After all, they probably have information and perspective on their own lives that outsiders lack.”

The authors also discuss the promotion of behavioral economics as a form of self-help, which they do not mind: “Behavioral economists and psychologists have produced a great body of insights on how human beings make decisions. While many of these insights are not as solid as we’ve been led to believe, they have nevertheless advanced our knowledge of the human mind. Our exploration of behavioral paternalism has forced us to question ideas and concepts that we once thought unassailable. We have, among other things, become more acutely aware of the failings of the neoclassical model of preferences and beliefs – which in turn drove us toward the notion of inclusive rationality that we have presented in this book. Therefore, we should not be understood as rejecting the whole of behavioral economics.” What they do mind are attempts to use it as a tool of coercive policymaking: “It is jarring, to say the least, to see social scientists pointing out the errors of private individuals – and then failing to consider that social scientists and policymakers are also subject to error. It is frustrating to see behavioral researchers demonstrating the complexity of real decision-making processes – and then ignoring that complexity when recommending regulatory corrections of those very processes. It is simply baffling to see behavioral economists showing how real behavior deviates from neoclassical norms – and then insisting that behavior must conform to those norms or else be judged deficient.”

In the end, the authors entirely reject the attitude of behavioral economists who “…approach humanity from a position of presumed superiority, like puppet masters correcting the behavior of errant puppets.” Instead, the authors insist that we “approach them as fellow human beings doing the best they can, trying to improve their own choices, and offering friendly advice on how others might do the same.”

In short, the experts’ advice should remain advice, not a coercive policy.

MY TAKE ON IT:

I greatly appreciate the authors’ effort in producing such a detailed and effective review of behavioral economics and of the attempts to apply it to policymaking. It is clearly a critical part of the contemporary clash of ideologies. On one side is the ideology of freedom, in which people do what they want as long as it does not harm anybody. On the other side is the ideology of “better” people making decisions for everybody. It is interesting how the latter ideology has transformed over time: from God-appointed kings and aristocracy, to all-knowing “scientific” socialists and communists, to “scientific” experts wielding not the theoretical works of Marx but the experimental research of behavioral economics. As far as I am concerned, I do not want anybody making decisions for me for the simple reason that, whatever the decision, I’ll pay the cost. This book also reasonably demonstrates that the scientific foundation of behavioral economics is quite shaky, so the quality of such decisions would be poor. I hope that the currently growing wave of rejection of the rule of “betters” will get solid scientific backing from this book and similar works.

20211016 – Unsettled

MAIN IDEA:

The main idea is to present a vast amount of actual data about climate change to help people understand the problems and their scale. The author makes the point that climate change is real, but its consequences are greatly exaggerated. Unfortunately, the global political and financial interests of elites drive this exaggeration to the extreme through unreliable models, massive media propaganda, and the corruption of science. The author also presents potential solutions and a set of requirements for them.

DETAILS:

Introduction

The introduction begins with facts from official assessment reports that remain largely unknown because they contradict the prevailing propaganda narrative:

- Humans have had no detectable impact on hurricanes over the past century.

- Greenland’s ice sheet isn’t shrinking any more rapidly today than it was eighty years ago.

- The net economic impact of human-induced climate change will be minimal through at least the end of this century.

The author then presents his credentials as a scientist and administrator with enough clout to convene a scientific workshop to assess the condition of climate science. Here is what the author discovered:

- Humans exert a growing, but physically small, warming influence on the climate. The deficiencies of climate data challenge our ability to untangle the response to human influences from poorly understood natural changes.

- The results from the multitude of climate models disagree with, or even contradict, each other and many kinds of observations. A vague “expert judgment” was sometimes applied to adjust model results and obfuscate shortcomings.

- Government and UN press releases and summaries do not accurately reflect the reports themselves. There was a consensus at the meeting on some important issues, but not at all the strong consensus the media promulgates. Distinguished climate experts (including report authors themselves) are embarrassed by some media portrayals of the science. This was somewhat shocking.

- In short, the science is insufficient to make useful projections about how the climate will change over the coming decades, much less what effect our actions will have on it.

Because the author is an honest natural scientist, he felt compelled, despite being a lifelong Democrat, to write this book and provide accurate information about the current state of climate science, which is very different from the media’s portrayal.

Part I: The Science

Part I clarifies how the climate has changed, how it will change in the future, and the impact of those changes. It also offers some basics about the official assessment reports that we look to for answers to those questions.

Chapter 1. What We Know About Warming

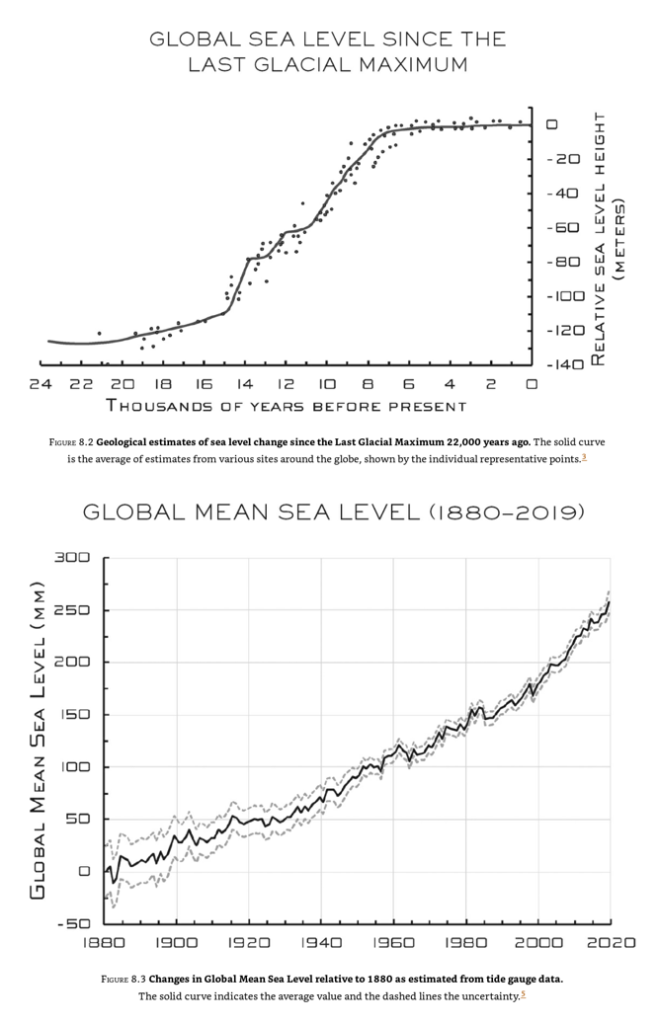

The chapter explains both the importance and challenges of obtaining quality observations of the earth’s climate (which is not the same as its weather) over many decades; it also reviews some of the indications of a warming globe and puts them in a geological context. This chapter provides information about climate trends via multiple graphs and pictures. Generally, it demonstrates some warming, but the warming is not catastrophic and is practically insignificant on a long-term scale. Here are the essential graphs:

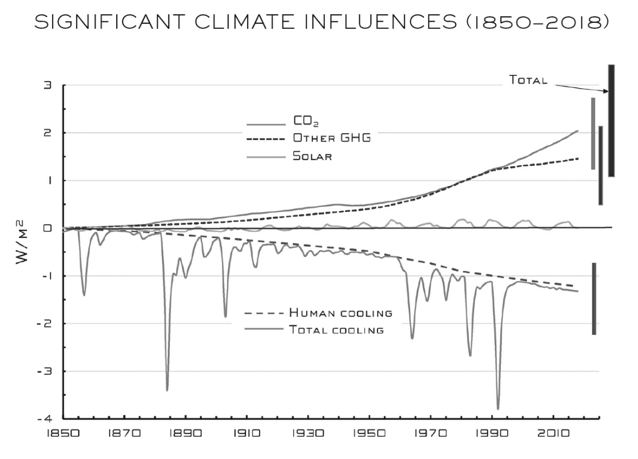

Chapter 2. Humble Human Influences

Chapter 2 then turns to how the earth’s temperature arises in the first place—from a delicate balance between warming sunlight and cooling heat radiation. We’ll see that this balance is disturbed by both human and natural influences, with greenhouse gases playing an important role. Because the climate is very sensitive, we need an accurate and precise understanding of those influences and how they’ve changed over time.

This chapter demonstrates the complexity of the factors affecting climate, some of which cause warming and some cooling.

Chapter 3. Emissions Explained and Extrapolated

The most important human influence on the climate is the growing concentration of carbon dioxide (CO2) in the atmosphere, largely due to the burning of fossil fuels. This is the focus of Chapter 3, particularly how the connection between CO2 emissions and concentration diminishes the prospect of even stabilizing growing human influences. Here is the graph of greenhouse gas emissions growth:

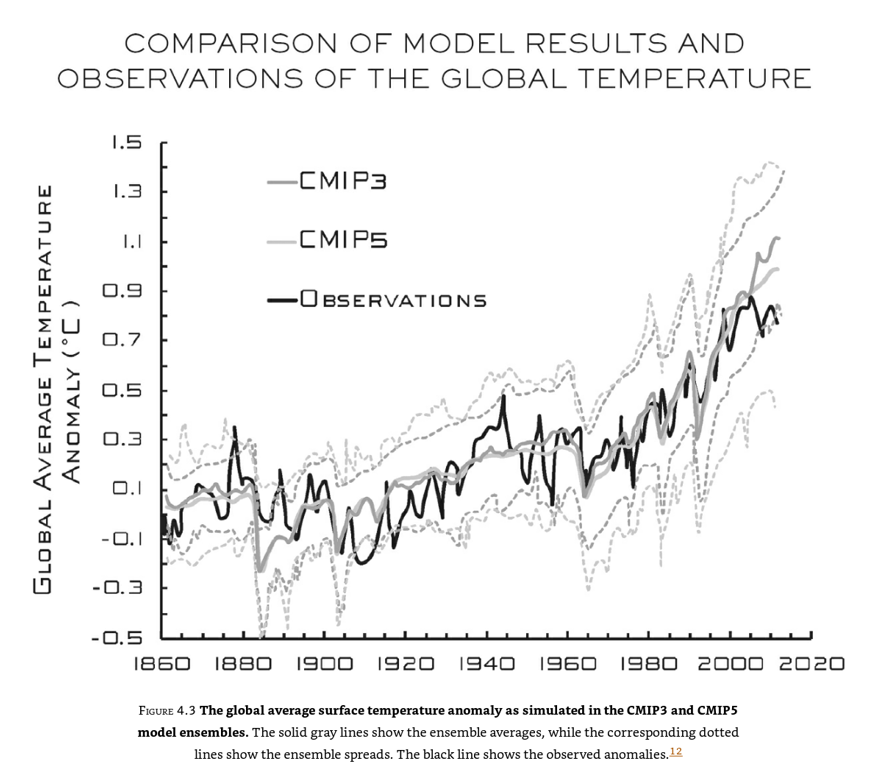

Chapter 4. Many Muddled Models

Computer models of how the climate responds to human and natural influences are the subject of Chapter 4. Drawing upon his half-century involvement with scientific computing and his authorship of a pioneering text on that subject, the author shows how these models work, what they tell us, and some of their deficiencies. These dozens of sophisticated models are what scientists use to make their projections and what the media cite in their coverage. Alas, they give results that differ significantly not only from each other but also from observations (that is, they’re right in a few ways but wrong in many others). In fact, the results have become more divergent with each generation of models. In other words, as our models have become more elaborate, their descriptions of the future have become less certain. In short, contemporary models are far from being scientifically sound tools because they rely too heavily on assumptions and, most importantly, have little predictive power.

At the end of the chapter, the author concludes: “The uncertainties in modeling of both climate change and the consequences of future greenhouse gas emissions make it impossible today to provide reliable, quantitative statements about relative risks and consequences and benefits of rising greenhouse gases to the Earth system as a whole, let alone to specific regions of the planet.”

Chapter 5. Hyping the Heat

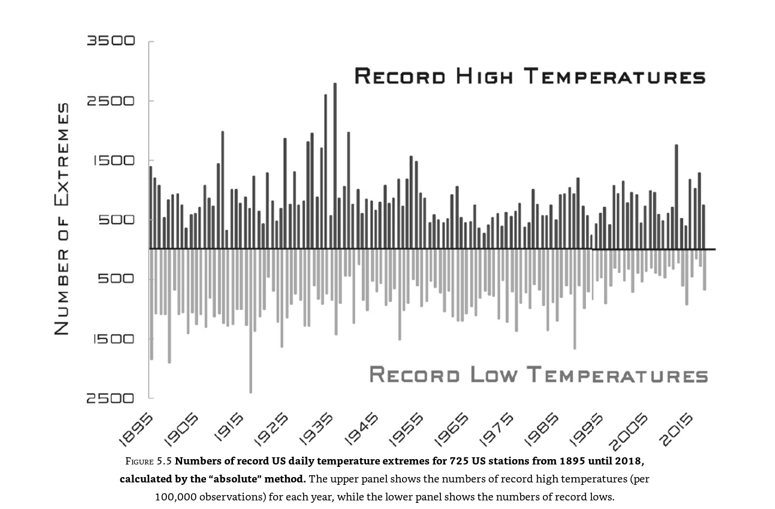

Chapter 5 is the first of five chapters dealing with contradictions between the science and the prevailing notion that “humans have already broken the climate,” exploring areas where the facts and popular perception are at odds (and probing the source of those discrepancies). This chapter focuses on record high temperatures in the US: they’re no more common today than they were in 1900, yet you wouldn’t know that from the misrepresentations of an allegedly authoritative assessment report. The chapter discusses the regularly occurring hype about temperature records and provides data demonstrating that it is not justified.

He concludes: “There have been some changes in temperature extremes across the contiguous United States. The annual number of high temperature records set shows no significant trend over the past century nor over the past forty years, but the annual number of record cold nights has declined since 1895, somewhat more rapidly in the past thirty years.”

Chapter 6. Tempest Terrors

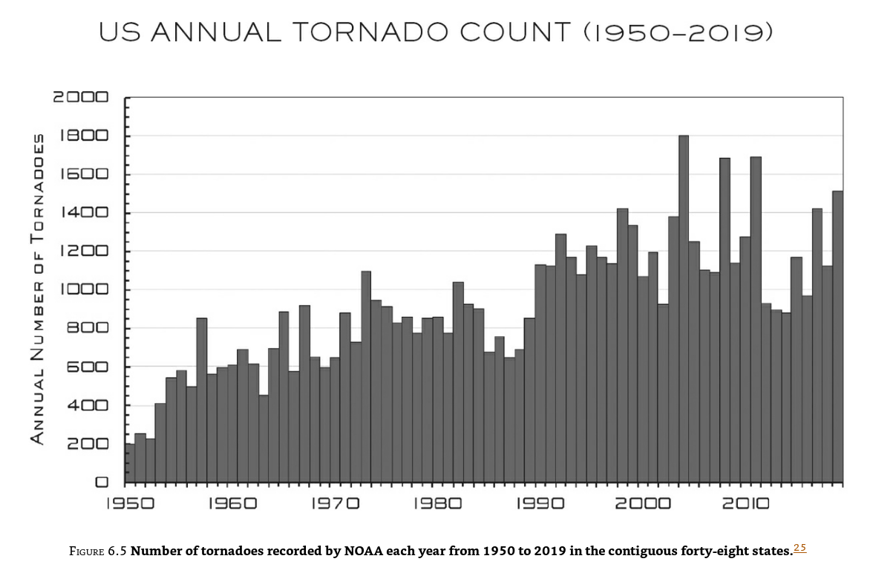

Chapter 6 likewise explains why experts conclude that human influences haven’t caused any observable changes in hurricanes, and how assessment reports obscure or distort that finding. Once again, the author demonstrates that whatever increase there is, it is not significant.

Chapter 7. Precipitation Perils—From Floods to Fires

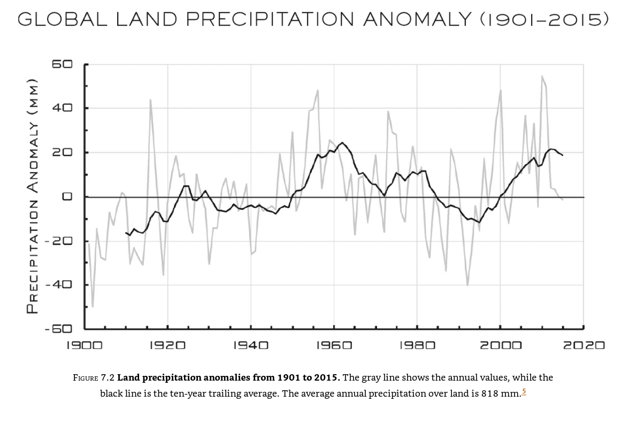

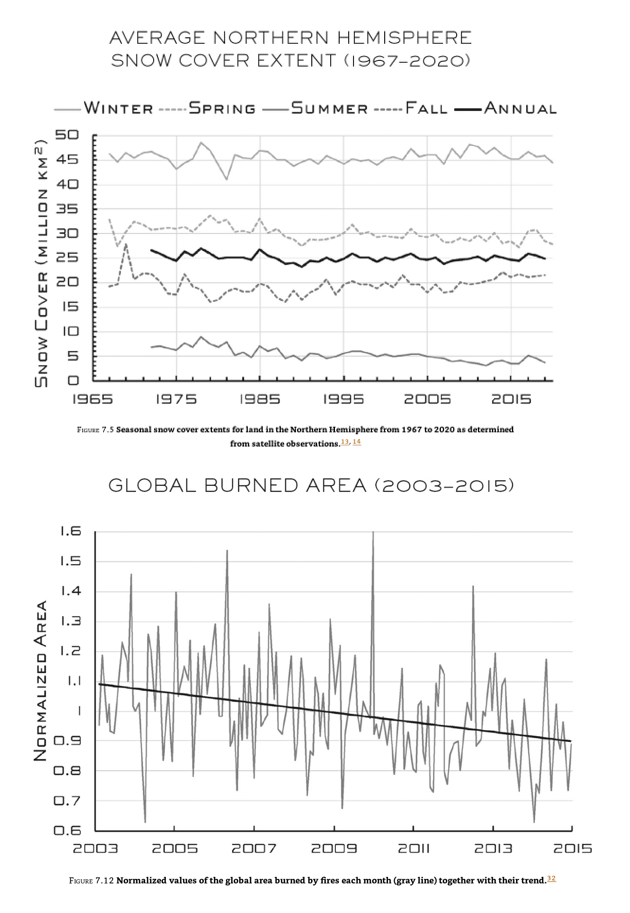

In Chapter 7, the author describes the modest changes seen in precipitation and related phenomena over the past century, discusses their significance, and highlights some points likely to surprise anyone who follows the news, for instance, that the global area burned by fires each year has declined by 25 percent since observations began in 1998. The figures above present the data.

Chapter 8. Sea Level Scares

Chapter 8 offers a levelheaded look at sea levels, which have been rising for many millennia. The author untangles what we really know about human influences on the current rate of rise (about one foot per century) and explains why it’s very hard to believe that surging seas will drown the coasts anytime soon. As with the other parameters discussed, sea level is rising, but not dramatically and not outside historical patterns.

Chapter 9. Apocalypses That Ain’t

Chapter 9 covers a trio of oft-cited climate-change impacts (fatalities, famine, and economic ruin), predictions of which are belied by the historical record and by the assessment reports’ own projections, even if it’s hard to discern this when reading the reports themselves. For each of these, the author demonstrates that the impact is trivial, even under worst-case scenarios. Moreover, the actual trend in death rates is downward.

Chapter 10. Who Broke “The Science” and Why

Chapter 10 takes up the question of “Who broke it?”—why the science has been communicated so poorly to decision makers and the public. The author describes how overwrought portrayals of a “climate crisis” serve the interests of diverse players, including environmental activists, the media, politicians, scientists, and scientific institutions.

Chapter 11. Fixing the Broken Science

Chapter 11 closes out Part I by describing how we might improve communication and understanding of climate science, including adversarial (“Red Team”) reviews of the assessment reports, best practices for media coverage, and what non-experts can do to be better informed and more critical consumers of all science media—but especially about the climate. Here the author provides a list of the symptoms of science manipulation:

- Anyone referring to a scientist with the pejoratives “denier” or “alarmist” is engaging in politics or propaganda.

- Any appeal to the alleged “97 percent consensus” among scientists is another red flag.

- Confusing weather and climate is another danger sign.

- Omitting numbers is also a red flag.

- Yet another common tactic is quoting alarming quantities without context.

- Non-expert discussions of climate science also often confuse the climate that has been (observations) with the climate that could be (model projections under various scenarios).

Part II: The Response

Part II begins its discussion of the response story by drawing a distinction between what society could do, what it should do, and what it will do in response to a changing climate—three very different issues often conflated, even by experts. The author also provides context for society’s response:

- Keeping human influences on the climate below levels deemed prudent by the UN and many governments would require that global carbon dioxide emissions, which have been rising for decades, vanish sometime in the latter half of this century.

- Emissions reductions would have to take place in the face of strongly growing energy demand driven by demographics and development, the dominance of fossil fuels, and the current drawbacks of low-emissions technologies.

- These barriers, combined with the uncertainty and vague nature of future climate impacts, mean that the most likely societal response will be to adapt to a changing climate, and that adaptation will very likely be effective.

Chapter 12. The Chimera of Carbon-Free

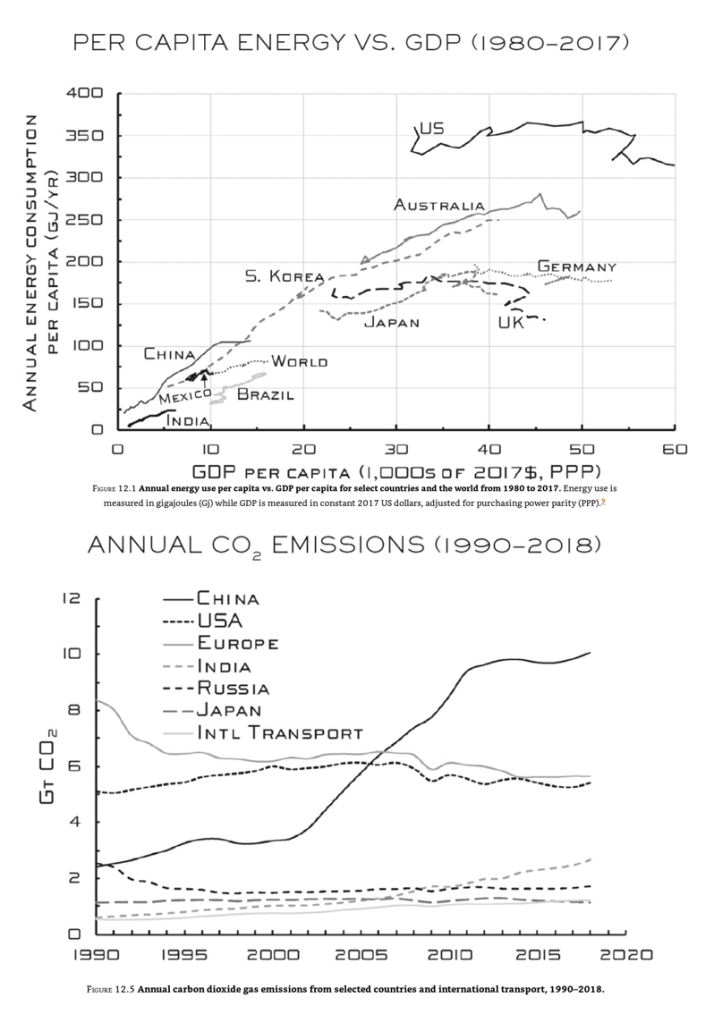

Chapter 12 illuminates the issue by discussing the formidable challenges in meaningfully reducing human influences on the climate, including the lack of progress toward the goals of the Paris Agreement. Here the author reviews the contributions of different countries to global emissions and how they change over time. The two graphs above represent this process.

Chapter 13. Could the US Catch the Chimera?

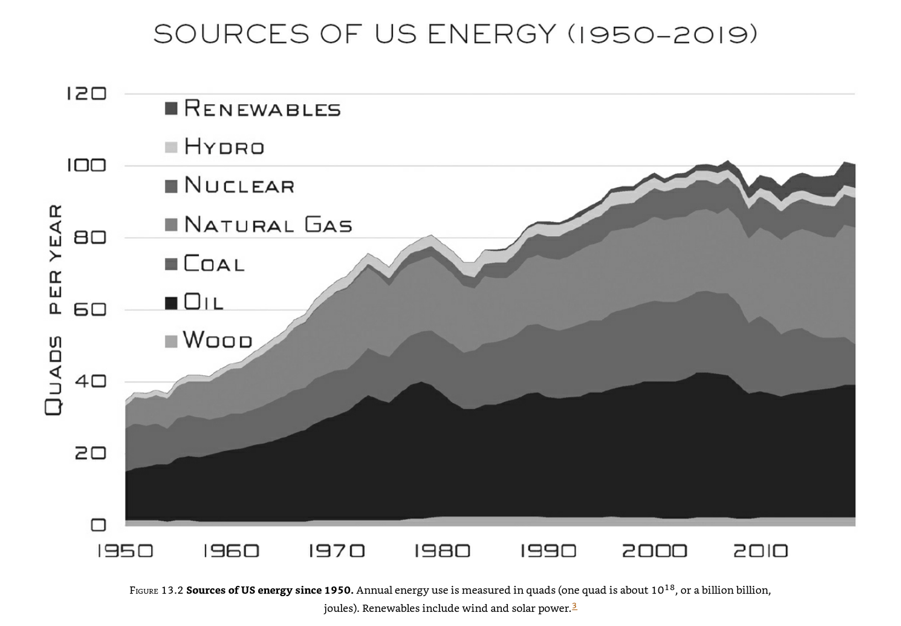

Chapter 13 sheds some light on the “could” issue by discussing the tremendous changes it would take to create a “zero-carbon” energy system in the US. The figure above illustrates the challenge.

The author discusses in detail the policy features required to decrease emissions in the USA.

Chapter 14. Plans B

Chapter 14 completes the response story with a discussion of “Plan B” strategies that allow the world to respond to a climate changing from either human or natural causes—adaptation, which will happen, and geoengineering, which could be deployed in extremis. Here are the key points that the author makes about adaptation:

- Adaptation is agnostic. Humans have been successfully adapting to changes in climate for millennia, and for most of that time, they did so without the foggiest notion of what (besides the vengeful gods) might be causing them. Thus, while the information we have now will help guide adaptation strategies, society can adapt to climate changes caused by natural phenomena or by human influences.

- Adaptation is proportional. Modest initial measures can be bolstered as and if the climate changes more.

- Adaptation is local. Adaptation is naturally tailored to the different needs and priorities of different populations and locations. This also makes it more politically feasible. Spending for the “here and now” (e.g., flood control for a local river) is far more palatable than spending to counter a vague and uncertain threat thousands of miles and two generations away. Further, local adaptation does not require the global consensus, commitment, and coordination that have proved so far elusive in mitigation efforts.

- Adaptation is autonomous. It is what societies do, and have been doing, since humanity first formed them—the Dutch, for example, have been building and improving dikes for centuries to claim land from the North Sea. Adaptation will happen on its own, whether we plan for it or not.

- Adaptation is effective. Societies have thrived in environments ranging from the Arctic to the Tropics. Adapting to a changing climate always acts to reduce net impacts from what they would be otherwise—after all, we wouldn’t change society to make things worse!

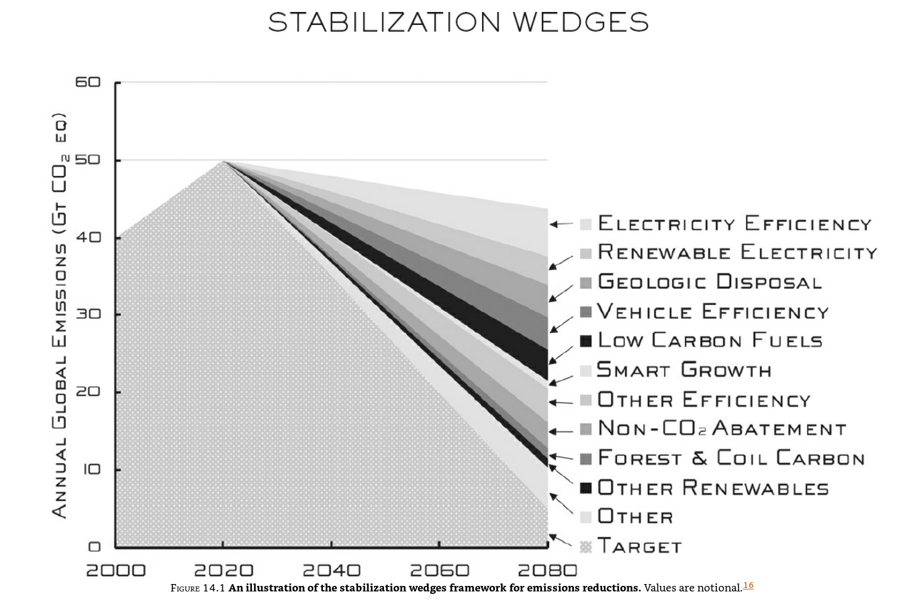

The author provides a very nice, information-dense graph (above) on the future handling of emissions.

Closing Thoughts

In the end, the author discusses the reasons for writing this book, its descriptive rather than prescriptive character, and his belief that climate science needs improvement. He also suggests increasing research into possible measures, such as geoengineering, in case of unexpected climate emergencies.

MY TAKE ON IT:

I like this book a lot because of its no-nonsense approach and the wealth of data presented in an easily digestible format. I also believe that humans impact the climate, and so do ants, chickens, volcanos, asteroids, and many more factors, living or not. However, I believe the scale of such impact is moderate. It poses no real danger to the existence and prosperity of humanity unless the execrable part of this humanity, the global elite, succeeds in imposing unreasonable restrictions on energy consumption and life for everybody else. Nevertheless, I am optimistic and believe that when people start feeling this impact on their wellbeing, they will respond; hopefully, they will do so peacefully and use the democratic process to bring the power crazies to heel.

20211009 – Noise

MAIN IDEA:

The main idea is to demonstrate that errors in judgment happen all the time and are not isolated occurrences. It is also to present the complex character of these errors as a combination of bias and noise, and eventually to recommend tools for managing this issue and maintaining strict decision hygiene.

DETAILS:

Introduction: Two Kinds of Error

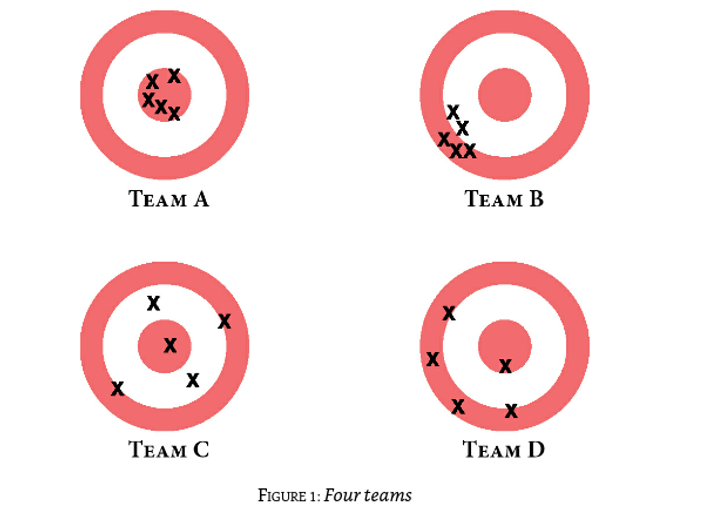

The introduction presents the book’s central theme, handling errors in human judgment, and describes the two types of such errors: noise and bias. It also shows a graphic representation of four teams’ shots at a target: A is on target, B is biased, C is noisy, and D is both biased and noisy.

Part I: Finding Noise

This part explores the difference between noise and bias, showing that public and private organizations can be noisy. It reviews two areas: sentencing (public sector) and insurance (private sector).

1. Crime and Noisy Punishment

This chapter presents the results of various research projects that convincingly demonstrate that judges’ decisions depend on many irrelevant factors, such as lunchtime, the weather, and whatnot. It discusses Marvin Frankel’s organization, “The Lawyers’ Committee for Human Rights,” and its legislative achievement in establishing sentencing guidelines. Here are data from the study of the results: “expected difference in sentence length between judges was 17%, or 4.9 months, in 1986 and 1987. That number fell to 11%, or 3.9 months, between 1988 and 1993.” In 2005, the Supreme Court made the guidelines advisory rather than mandatory, and the variance between sentences by different judges nearly doubled.

2. A Noisy System

This chapter discusses noise in the insurance business. First, it describes the results of a noise audit at an insurance company, which found a median difference of 55% between underwriters’ premium estimates for the same cases, even though executives expected a difference of around 10%. It then analyzes how this could happen and concludes that it resulted from the illusion of agreement. Further discussion covers the psychological processes that lead to this illusion, the costs of high noise levels, and the need for regular noise measurement and for measures to decrease noise.
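The audit’s headline number can be sketched in a few lines of Python. As we read the book, the noise between two underwriters on the same case is the absolute difference between their quotes divided by the average of the two, and the audit reports the median of this ratio across pairs. The function names and the sample premiums below are hypothetical illustrations, not the company’s data.

```python
from itertools import combinations
from statistics import median


def relative_difference(a: float, b: float) -> float:
    """Absolute difference between two quotes, divided by their average."""
    return abs(a - b) / ((a + b) / 2)


def median_relative_difference(quotes: list[float]) -> float:
    """Median relative difference over every pair of quotes for the same case.

    Needs at least two quotes; statistics.median raises StatisticsError otherwise.
    """
    return median(relative_difference(a, b) for a, b in combinations(quotes, 2))


# Hypothetical premiums set by two underwriters for the same case.
print(round(median_relative_difference([9500, 16700]), 2))  # 7200 / 13100 -> 0.55
```

Dividing by the pair’s average, rather than by either quote, keeps the measure symmetric: it does not matter which underwriter is listed first.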

3. Singular Decisions

This chapter discusses singular decisions vs. recurrent decisions and concludes that singular decisions are also quite noisy. The main point here is that singular decisions are essentially recurrent decisions made only once, so people should apply the same noise-reduction techniques in both cases.

Part II: Your Mind Is a Measuring Instrument

Part II investigates the nature of human judgment and explores how to measure accuracy and error. It discusses how human decisions are susceptible to both bias and noise. This part makes an interesting point: “judgment can therefore be described as measurement in which the instrument is a human mind. Implicit in the notion of measurement is the goal of accuracy—to approach truth and minimize error.”

4. Matters of Judgment

This chapter presents a case study about CEO selection as an example of a judgment process overloaded with relevant and irrelevant information. First, it offers the idea of an internal signal: “The essential feature of this internal signal is that the sense of coherence is part of the experience of judgment. It is not contingent on a real outcome. As a result, the internal signal is just as available for nonverifiable judgments as it is for real, verifiable ones.” Further, it reviews ways to evaluate judgments even when outcomes are often inconclusive. It also discusses the value of consistency and defines noise as an inconsistency that damages the system’s credibility.

5. Measuring Error

This chapter discusses how much bias and noise each contribute to error. The main point here is that decision-makers should handle noise as rigorously as bias because it can cause similar levels of damage. This chapter also provides a few simple statistical tools for measuring bias and noise.

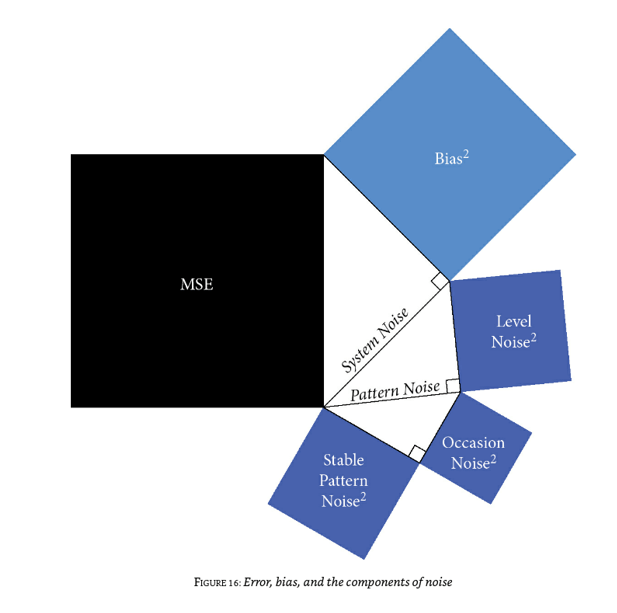
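To make the chapter's statistical point concrete, here is a minimal Python sketch of the book's error equation, MSE = Bias² + Noise², applied to several judgments of a single true value. It assumes that bias is the average signed error and that noise is the population standard deviation of the judgments; the function name `error_components` and the forecast values are hypothetical illustrations, not data from the book.

```python
from statistics import fmean, pstdev

def error_components(judgments, true_value):
    """Split the mean squared error of judgments about one true value into bias and noise."""
    errors = [j - true_value for j in judgments]
    mse = fmean(e * e for e in errors)   # mean squared error
    bias = fmean(errors)                 # average signed error
    noise = pstdev(judgments)            # population standard deviation of the judgments
    return mse, bias, noise

# Hypothetical forecasts of a quantity whose true value is 100.
mse, bias, noise = error_components([104, 98, 110, 107, 101], 100)
print(f"MSE={mse:.2f} bias^2={bias**2:.2f} noise^2={noise**2:.2f}")
# MSE=34.00 bias^2=16.00 noise^2=18.00
```

Because the two squared terms simply add, an equal reduction in noise² lowers overall error exactly as much as the same reduction in bias², which is why the chapter argues for treating them with equal rigor.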

6. The Analysis of Noise

This chapter demonstrates how to use these tools to analyze noise in criminal sentencing. It breaks system noise down into level noise and pattern noise:

- Level noise is variability in the average level of judgments by different judges.

- Pattern noise is variability in judges’ responses to particular cases.

It also gives the formula:

$$\text{System Noise}^2 = \text{Level Noise}^2 + \text{Pattern Noise}^2$$

The conclusion: “Level noise is when judges show different levels of severity. Pattern noise is when they disagree with one another on which defendants deserve more severe or more lenient treatment. And part of pattern noise is occasion noise—when judges disagree with themselves.”
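The decomposition above can be computed from a noise audit in which every judge rates every case. This minimal Python sketch works under stated assumptions: judgments are stored in a complete judge-by-case table, all variances are population variances, and pattern noise is taken as the judge-by-case interaction left over after removing each judge's average severity and each case's average sentence. The function name `noise_components` and the sentence values are hypothetical.

```python
from statistics import fmean, pvariance

def noise_components(ratings):
    """ratings[j][c] is judge j's judgment of case c (complete judge x case table)."""
    n_cases = len(ratings[0])
    case_means = [fmean(row[c] for row in ratings) for c in range(n_cases)]
    judge_means = [fmean(row) for row in ratings]
    grand_mean = fmean(judge_means)

    # System noise^2: variance across judges for each case, averaged over cases.
    system_sq = fmean(pvariance([row[c] for row in ratings]) for c in range(n_cases))
    # Level noise^2: variance of the judges' average severity.
    level_sq = pvariance(judge_means)
    # Pattern noise^2: judge x case interaction left after removing judge and case effects.
    pattern_sq = fmean(
        (row[c] - jm - case_means[c] + grand_mean) ** 2
        for row, jm in zip(ratings, judge_means)
        for c in range(n_cases)
    )
    return system_sq, level_sq, pattern_sq

# Hypothetical sentences (in years): 3 judges x 3 cases.
sentences = [[2, 4, 6], [3, 6, 6], [1, 2, 6]]
system_sq, level_sq, pattern_sq = noise_components(sentences)
print(round(system_sq, 3), round(level_sq, 3), round(pattern_sq, 3))  # 1.111 0.667 0.444
```

With a complete table, the cross terms cancel, so level noise² plus pattern noise² reproduces system noise² exactly (here 0.667 + 0.444 = 1.111).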

7. Occasion Noise

This chapter discusses occasion noise, which arises from many small, hard-to-measure factors. Experiments in which people repeatedly estimate the same unknown quantity show that the average of several estimates is more accurate than a single one, although the first estimate is usually closer to the truth than later ones. The chapter compares averaging one person's repeated estimates with averaging the estimates of a crowd, finds that the comparison holds, and names the effect “the crowd within.” This chapter also discusses sources of occasion noise, such as mood, gullibility, weather, and so on. The main point is that individuals are not always the same, and their behavior and decisions depend on multiple factors. It refers to interesting research showing that asylum judges were 19% less likely to grant asylum if they had granted it in the previous two cases. The conclusions are: “Judgment is like a free throw: however hard we try to repeat it precisely, it is never exactly identical.” and “Although you may not be the same person you were last week, you are less different from the ‘you’ of last week than you are from someone else today. Occasion noise is not the largest source of system noise.”

8. How Groups Amplify Noise

This chapter reviews group decision-making and finds it even noisier than individual decision-making. This happens because irrelevant factors multiply and gain influence in groups: “Who speaks first, who speaks last, who speaks with confidence, who is wearing black, who is seated next to whom, who smiles or frowns or gestures at the right moment.” The chapter reviews experiments with music downloads, various referenda, and web comments in the UK and the USA. It also discusses informational cascades, in which a slight change in the order of presentations creates a path-dependent dynamic of support for one decision. The final part of the chapter discusses group polarization, in which an idea that gains slightly more support early on attracts ever more support as people rush to join the majority, pushing the group toward a more extreme position. This generally leads to higher levels of noise and error. The conclusion: “Since many of the most important decisions in business and government are made after some sort of deliberative process, it is especially important to be alert to this risk. Organizations and their leaders should take steps to control noise in the judgments of their individual members.”

Part III: Noise in Predictive Judgments

Part III explores predictive judgment, the use of rules and algorithms, and the superiority of these methods over human judgment in predictive power.

9. Judgments and Models

This chapter compares the accuracy of predictions made by professionals, by machines, and by simple rules. The conclusion is that the professionals come in third. To reach this conclusion, the chapter compares predictions of new employees' performance made by human judgment with those made by formal models and algorithms. Models beat humans not only in this case but also in clinical predictions. Moreover, this holds not only for formal models but also for models of individual judges: a model of a person predicts future outcomes better than that person's own judgment.

10. Noiseless Rules

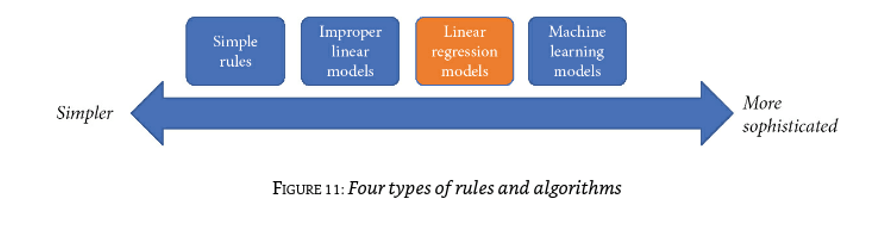

This chapter explores why algorithms are better than experts and shows that noise is a significant factor in human judgment’s inferiority. Predictions are accurate to the extent that prediction matches outcome as measured by the percent concordant (PC). PC of 50% is a random match, and higher means more predictable power. Here is a nice graph for complexity increase:

The chapter analyses this and concludes that, generally, simple rules work better. However, AI machine learning produces even better results. The chapter then reviews an example of better bail decisions. In the end, the chapter discusses the reasons people distrust algorithms and rules.
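The percent concordant can be computed directly from its definition. This minimal Python sketch counts, over all pairs of cases, how often the prediction and the outcome rank the pair in the same order. Skipping pairs that are tied on either variable is an assumption of this sketch, and `percent_concordant` and the example scores are hypothetical.

```python
from itertools import combinations

def percent_concordant(predictions, outcomes):
    """Share of case pairs that the prediction and the outcome rank in the same order."""
    concordant = 0
    compared = 0
    for i, j in combinations(range(len(predictions)), 2):
        pred_diff = predictions[i] - predictions[j]
        outcome_diff = outcomes[i] - outcomes[j]
        if pred_diff == 0 or outcome_diff == 0:
            continue  # assumption: pairs tied on either variable are left out
        compared += 1
        if (pred_diff > 0) == (outcome_diff > 0):
            concordant += 1
    return concordant / compared

# Hypothetical predicted ratings of four new hires and their later performance scores.
print(percent_concordant([1, 2, 3, 4], [1, 3, 2, 4]))  # 5 of 6 pairs agree, about 0.83
```

A PC of 0.5 means the prediction orders pairs no better than a coin flip; 1.0 means it orders every pair correctly.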

11. Objective Ignorance

This chapter discusses an essential limit on predictive accuracy: most judgments are made in a state of objective ignorance, because many of the things the future depends on cannot be known. The chapter examines objective ignorance in depth and provides multiple examples, from pundits to judges and bail panels. One fascinating point here is the denial of ignorance: human overconfidence adds a lot of noise and lowers the quality of decisions.

12. The Valley of the Normal

Finally, this chapter shows that objective ignorance affects not just the ability to predict events but even the capacity to understand them, which is an essential part of the answer to the puzzle of why noise tends to be invisible. The chapter also describes a large-scale longitudinal project that followed thousands of children and families over many years, comparing predictions with outcomes. The result: “The main conclusion of the challenge is that a large mass of predictive information does not suffice for the prediction of single events in people’s lives—and even the prediction of aggregates is quite limited.” In other words, it demonstrated the difference between knowledge based on data and an understanding of the situation that could produce a valid prediction. In the end, the chapter provides the following list of observations on the limits of understanding:

- “Correlations of about .20 (PC = 56%) are quite common in human affairs.”

- “Correlation does not imply causation, but causation does imply correlation.”

- “Most normal events are neither expected nor surprising, and they require no explanation.”

- “In the valley of the normal, events are neither expected nor surprising—they just explain themselves.”

- “We think we understand what is going on here, but could we have predicted it?”
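The pairing of a correlation of .20 with a PC of 56% can be checked with a standard result for two variables that follow a bivariate normal distribution: PC = 1/2 + arcsin(r)/π. This Python sketch assumes that normality; the function name `pc_from_correlation` is hypothetical.

```python
import math

def pc_from_correlation(r):
    """Percent concordant implied by correlation r, assuming a bivariate normal relationship."""
    return 0.5 + math.asin(r) / math.pi

for r in (0.0, 0.2, 0.6, 1.0):
    print(f"r = {r:.2f} -> PC = {pc_from_correlation(r):.0%}")
# r = 0.00 -> PC = 50%
# r = 0.20 -> PC = 56%
# r = 0.60 -> PC = 70%
# r = 1.00 -> PC = 100%
```

The mapping shows why a correlation of .20, common in human affairs, leaves most pairwise predictions close to a coin flip.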

Part IV: How Noise Happens

Part IV explores psychological causes of noise, “including personality and cognitive style; idiosyncratic variations in the weighting of different considerations; and the different uses that people make of the very same scales.”

13. Heuristics, Biases, and Noise

This chapter presents three important judgment heuristics on which System 1 relies extensively. It shows how these heuristics cause predictable, directional errors (statistical bias) as well as noise. The authors discuss substitution bias, conclusion bias, and other psychological biases. They caution against blaming errors on unspecified biases and against distorting evidence to fit a prejudgment based on first impressions. They also suggest that biases shared by a group create systematic bias, like shots that all miss the bull's-eye in the same direction, whereas biases that differ across individuals simply add noise, like shots scattered around the target.

14. The Matching Operation

This chapter focuses on matching, a particular operation of System 1, and discusses the errors it can produce. These errors mainly arise when an impression is translated onto a measurement scale: different people can map the same impression to different values on the scale, and this scaling mismatch produces errors.

15. Scales

This chapter turns to an indispensable accessory in all judgments: the scale on which the judgments are made. It shows that the choice of an appropriate scale is a prerequisite for good judgment and that ill-defined or inadequate scales are an important source of noise. Here the authors provide a formula that breaks down the variance of judgments made on a noisy scale:

$$\text{Variance of Judgments} = \text{Variance of Just Punishments} + (\text{Level Noise})^2 + (\text{Pattern Noise})^2$$

They also provide a graphic representation for punitive scales:

16. Patterns

This chapter explores the psychological source of what may be the most intriguing type of noise: the patterns of responses that different people have to different cases. Like individual personalities, these patterns are not random and are mostly stable over time, but their effects are not easily predictable. Here is another formula:

$$(\text{Pattern Noise})^2 = (\text{Stable Pattern Noise})^2 + (\text{Occasion Noise})^2$$

17. The Sources of Noise

This chapter summarizes the previous discussion about noise and its components. It also proposes an answer to the puzzle raised earlier: why is noise, despite its ubiquity, rarely considered an important problem? Here is a combined graphical representation of Mean Square Error (MSE):

Part V: Improving Judgments

Part V explores ways to improve human judgment.

18. Better Judges for Better Judgments

This chapter discusses the characteristics of superior judges. The authors look at characteristics such as intelligence and cognitive style. They also distinguish true experts, whose predictions can be verified, from respect-experts: people with credentials who make unverifiable statements.

19. Debiasing and Decision Hygiene

This chapter reviews many attempts to counteract psychological biases, with some clear failures and some clear successes. It briefly reviews debiasing strategies, distinguishing ex post from ex ante debiasing, provides some experimental data on them, and discusses their limitations. It then suggests a promising approach: asking a designated decision observer, equipped with a checklist that covers the relevant biases and decision points, to search for diagnostic signs that could indicate, in real time, that a group's work is being affected by one or several familiar biases. Overall, the authors recommend strict decision hygiene to reduce both bias and noise.

20. Sequencing Information in Forensic Science

This chapter reviews the case of forensic science, which illustrates the importance of sequencing information. The search for coherence leads people to form early impressions based on the limited evidence available and then to confirm their emerging prejudgment. This makes it important not to be exposed to irrelevant information early in the judgment process. The authors review an example of fingerprint analysis and how various biases and noise impacted its quality. They also stress the need for a second opinion that has to be independent to be meaningful.

21. Selection and Aggregation in Forecasting

This chapter reviews the case of forecasting, which illustrates the value of one of the most important noise-reduction strategies: aggregating multiple independent judgments. The “wisdom of crowds” principle is based on the averaging of multiple independent judgments, which is guaranteed to reduce noise. Beyond straight averaging, there are other methods for aggregating judgments, also illustrated by the example of forecasting. Here the authors refer to Tetlock’s “Good Judgment Project” and discuss its mixed results.
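A quick simulation illustrates why averaging independent judgments reduces noise: if each judgment has standard deviation σ and the errors are independent, the average of n judgments has standard deviation σ/√n. This Python sketch assumes unbiased, normally distributed, independent judgments; the parameters (a true value of 100 and a per-judge standard deviation of 10) and the function name `noise_of_average` are hypothetical. Averaging in this way does nothing about a bias that all judges share.

```python
import math
import random
from statistics import fmean, pstdev

def noise_of_average(n_judges, true_value=100.0, judge_sd=10.0, trials=20_000, seed=1):
    """Simulated noise (standard deviation) of the average of n independent judgments."""
    rng = random.Random(seed)  # fixed seed so the simulation is repeatable
    averages = [
        fmean(rng.gauss(true_value, judge_sd) for _ in range(n_judges))
        for _ in range(trials)
    ]
    return pstdev(averages)

for n in (1, 4, 16):
    simulated = noise_of_average(n)
    predicted = 10.0 / math.sqrt(n)  # theoretical sigma / sqrt(n) for judge_sd = 10
    print(f"n = {n:2d}: simulated noise {simulated:.2f}, predicted {predicted:.2f}")
```

The simulated values should land close to 10, 5, and 2.5: quadrupling the number of independent judges roughly halves the noise.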

22. Guidelines in Medicine

This chapter reviews noise in medicine and efforts to reduce it. It points to the importance and general applicability of a noise-reduction strategy previously introduced with the example of criminal sentencing: judgment guidelines. Guidelines can be a powerful noise-reduction mechanism because they directly reduce between-judge variability in final judgments. Here the authors pay special attention to psychiatry, a field with notably low consistency between specialists' judgments.

23. Defining the Scale in Performance Ratings

This chapter turns to a challenge in business life: performance evaluations. Efforts to reduce noise there demonstrate the critical importance of using a shared scale grounded in an outside view. This is an important decision hygiene strategy for a simple reason: judgment entails the translation of an impression onto a scale, and if different judges use different scales, there will be noise. Here the authors suggest that relative scales are more appropriate than absolute ones.

24. Structure in Hiring

This chapter explores the related but distinct topic of personnel selection, which has been extensively researched over the past hundred years. It illustrates the value of an essential decision hygiene strategy: structuring complex judgments. By structuring, the authors mean decomposing a judgment into its component parts, managing the process of data collection to ensure the inputs are independent of one another, and delaying the holistic discussion and the final judgment until all these inputs have been collected.

25. The Mediating Assessments Protocol

This chapter proposes a general approach to option evaluation called the mediating assessments protocol, or MAP for short. MAP starts from the premise that “options are like candidates” and describes schematically how structured decision making, along with the other decision hygiene strategies mentioned above, can be introduced in a typical decision process for both recurring and singular decisions.

Part VI: Optimal Noise

Part VI explores the optimal level of noise, given that eradicating noise is neither possible nor even desirable.

26. The Costs of Noise Reduction

This chapter reviews the first two of seven major objections to efforts to reduce or eliminate noise:

- First, reducing noise can be expensive; it might not be worth the trouble. The steps that are necessary to reduce noise might be highly burdensome. In some cases, they might not even be feasible.